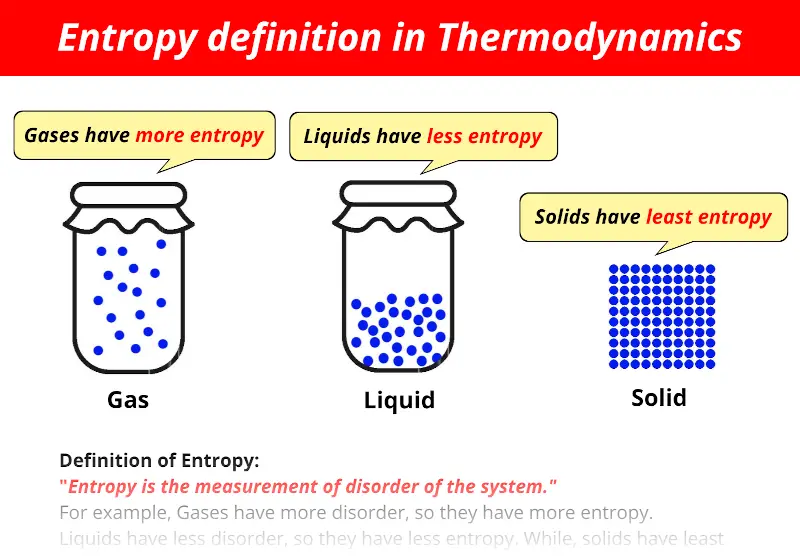

The concept of entropy emerged from the mid-19th-century discussion of the efficiency of heat engines. Entropy is a thermodynamic property, like temperature, pressure, and volume, but unlike them it cannot easily be visualised. In physics, entropy is a quantitative measure of disorder, or of a system's thermal energy per unit temperature that is unavailable for doing useful work. It is the thermodynamic function we use to measure the uncertainty or disorder of a system.

The quantity we have defined is very useful in deciding whether a given reaction will occur. It is evident from experience that ice melts, iron rusts, and gases mix together, and scientists have concluded that in a spontaneous process the total entropy must increase. The apparent discrepancy in the entropy change between an irreversible and a reversible process becomes clear once we consider the entropy changes of both the system and its surroundings, as described by the second law of thermodynamics.

Entropy Formula

According to Clausius, entropy is defined through its change: when a small amount of heat $\delta q_{\text{rev}}$ is transferred reversibly at absolute temperature $T$, the entropy of the system changes by $dS = \delta q_{\text{rev}}/T$. Several equations follow from this. If the process happens at a constant temperature, the entropy change of the system is $\Delta S_{\text{system}} = q_{\text{rev}}/T$. The total entropy change includes both the entropy of the system and the entropy of the surroundings: $\Delta S_{\text{total}} = \Delta S_{\text{system}} + \Delta S_{\text{surroundings}}$.

Some rules of thumb: the entropy of a solid (particles closely packed) is lower than that of a gas (particles free to move), and entropy increases with chemical complexity. When a gas dissolves in water the entropy decreases, whereas it generally increases when a liquid or solid dissolves in water.

Some processes are reversible; the melting of metals is one example of a reversible phase change. On the other hand, blowing up a building or frying an egg is an irreversible change.
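The two constant-temperature formulas can be tried out numerically. This is a minimal sketch, assuming heat is exchanged reversibly with bodies large enough that their temperatures do not change; the function names are hypothetical, and the enthalpy of fusion of ice (about 6.01 kJ/mol at 273.15 K) is a standard textbook value rather than a figure from this post.

```python
def entropy_change_isothermal(q_rev: float, temperature: float) -> float:
    """Return ΔS = q_rev / T in J/K (q_rev in joules, T in kelvin)."""
    if temperature <= 0:
        raise ValueError("absolute temperature must be positive")
    return q_rev / temperature


def total_entropy_change(ds_system: float, ds_surroundings: float) -> float:
    """ΔS_total = ΔS_system + ΔS_surroundings."""
    return ds_system + ds_surroundings


# Melting 1 mol of ice at its melting point (a reversible phase change).
# Assumes ΔH_fus ≈ 6010 J/mol is absorbed as q_rev at 273.15 K.
ds_melting = entropy_change_isothermal(6010.0, 273.15)

# 1000 J of heat flowing from a hot body (400 K) to a cold body (300 K).
# Both bodies are treated as reservoirs whose temperature stays fixed.
q = 1000.0
ds_hot = entropy_change_isothermal(-q, 400.0)   # hot body loses heat
ds_cold = entropy_change_isothermal(q, 300.0)   # cold body gains heat
ds_total = total_entropy_change(ds_hot, ds_cold)

print(f"melting ice: {ds_melting:.1f} J/K")      # melting ice: 22.0 J/K
print(f"heat flow total: {ds_total:+.3f} J/K")  # heat flow total: +0.833 J/K
```

The total for the heat flow is positive, as the second law requires for heat moving spontaneously from hot to cold; running the same heat the other way would give a negative total.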
Generally, entropy is described as a measure of the randomness or disorder of a system, although strictly it is a state function, and calling it "the state of disorder" of a system is a loose and often misleading shorthand. The concept was introduced by the German physicist Rudolf Clausius. Qualitatively, entropy measures how much the energy of atoms and molecules becomes spread out in a process, and it can be defined either in terms of the statistical probabilities of a system or in terms of other thermodynamic quantities. Generations of students struggled with Carnot's cycle and the various types of expansion of ideal and real gases, and never really understood why they were doing so.

Properties of entropy: entropy is greater in malleable solids, whereas it is lower in brittle and hard substances. When a process is irreversible, the total entropy increases, while for a reversible cyclic process the entropy does not change. Watching a movie is a loose picture of a reversible process, because the movie can just as well be played backwards.

The Second Law of Thermodynamics

The second law of thermodynamics states that the total entropy of an isolated system never decreases: it stays the same in a reversible process and increases in an irreversible one.

Entropy is not energy; entropy describes how the energy in the universe is distributed. There is a constant amount of energy in the universe, but the way it is distributed is always changing. When the distribution changes from a less probable one (e.g., one particle has all the energy in the universe and the rest have none) to a more probable one, entropy increases.

As another example, suppose you take a ball and put it on a table. In how many ways can you arrange this ball? The answer is one. Now you put a second ball on the table, and you can arrange the two balls in two ways. The more balls you add, the more ways they can be arranged.

Similarly, suppose you spray perfume in one corner of a room. What happens next? The smell spreads through the entire room, and the perfume molecules eventually fill it.

If each bond of our Einstein solid could have any value of energy (such as 1.25 or 345.8461 quanta), there would be an infinite number of microstates for any given total energy.

Entropy is also used outside physics. In the context of decision trees, entropy is a measure of disorder or impurity in a node: a node with a more mixed composition, such as 2 Pass and 2 Fail, has higher entropy than a node that contains only Pass or only Fail.
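For the decision-tree sense of entropy, here is a minimal sketch using Shannon entropy in bits, $H = -\sum_i p_i \log_2 p_i$, where $p_i$ is the fraction of samples in the node with class $i$. This is the usual choice for this impurity measure, but the function name `node_entropy` and the "Pass"/"Fail" labels are illustrative assumptions.

```python
from collections import Counter
from math import log2


def node_entropy(labels: list[str]) -> float:
    """Shannon entropy (in bits) of the class labels in one tree node."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = Counter(labels)
    # Sum -p * log2(p) over the classes actually present in the node.
    return sum(-(c / n) * log2(c / n) for c in counts.values())


mixed = node_entropy(["Pass", "Pass", "Fail", "Fail"])  # 2 Pass, 2 Fail
pure = node_entropy(["Pass", "Pass", "Pass", "Pass"])   # only Pass

print(mixed)  # 1.0
print(pure)   # 0.0
```

A 3-to-1 split lands in between, at about 0.811 bits, so the measure grows smoothly as a node becomes more mixed.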

Because energy in an Einstein solid comes only in integer multiples of quanta, the number of microstates is countable.
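Because the energy comes in whole quanta, the microstates can be listed one by one. This is a minimal sketch, assuming the standard Einstein-solid model of $N$ distinguishable oscillators (playing the role of the "bonds" above) sharing $q$ indistinguishable quanta. It enumerates every distribution by brute force and compares the count with the well-known closed form $\Omega(N, q) = \binom{q + N - 1}{q}$; the function names are hypothetical.

```python
from itertools import product
from math import comb


def count_microstates_brute_force(n_oscillators: int, q_quanta: int) -> int:
    """Count ways to give each oscillator a whole number of quanta summing to q."""
    return sum(
        1
        for state in product(range(q_quanta + 1), repeat=n_oscillators)
        if sum(state) == q_quanta
    )


def count_microstates_formula(n_oscillators: int, q_quanta: int) -> int:
    """Closed form Ω(N, q) = C(q + N - 1, q) for an Einstein solid."""
    return comb(q_quanta + n_oscillators - 1, q_quanta)


for n, q in [(3, 3), (3, 4), (4, 2)]:
    print(n, q, count_microstates_brute_force(n, q), count_microstates_formula(n, q))
# 3 3 10 10
# 3 4 15 15
# 4 2 10 10
```

The brute-force loop only works because each oscillator's energy ranges over a finite set of whole numbers; with continuous energies there would be nothing finite to iterate over.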

Essential to the Boltzmann definition of entropy, $S = k_B \ln W$ (where $W$ is the number of microstates and $k_B$ is the Boltzmann constant), is that energy is quantized. Entropy reflects the number of ways in which a system can be arranged: the higher the entropy, the more disordered the system.
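Boltzmann's formula $S = k_B \ln W$ turns a count of arrangements into an entropy. This is a minimal sketch applying it to the ball example, under an assumption the post does not spell out: the balls are distinguishable and an "arrangement" means an ordering of them, so $W = n!$ (one ball gives 1 way, two balls give 2 ways, matching the example). The helper names are hypothetical.

```python
from math import factorial, lgamma, log

K_B = 1.380649e-23  # Boltzmann constant in J/K (exact in the 2019 SI)


def arrangements(n_balls: int) -> int:
    """W = n! orderings of n distinguishable balls (assumed counting rule)."""
    return factorial(n_balls)


def boltzmann_entropy(n_balls: int) -> float:
    """S = k_B ln W, using lgamma(n + 1) = ln(n!) so large n stays cheap."""
    return K_B * lgamma(n_balls + 1)


for n in [1, 2, 3, 10]:
    w = arrangements(n)
    print(n, w, log(w), boltzmann_entropy(n))
```

Using `lgamma` instead of `log(factorial(n))` is a design choice for large $n$: it avoids building an enormous integer while giving the same $\ln W$.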
